大数据开发中的元数据:从基础到高级应用的全面指南

在大数据开发中,元数据(Metadata)是指描述数据的数据。元数据可以提供有关数据结构、数据类型、数据约束和数据关系的重要信息。合理利用元数据可以显著提高数据建模和管理的效率。本文将详细介绍如何根据元数据建表,并提供一些代码示例来说明具体操作。

目录

什么是元数据?

元数据是关于数据的信息,描述了数据的结构、含义、属性及其相互关系。在大数据系统中,元数据可以帮助我们理解数据来源、数据类型、数据的约束条件等。常见的元数据包括:

- 数据库名

- 表名

- 字段名

- 数据类型

- 字段长度

- 是否为空

- 主键和外键约束

1. 根据元数据建表的步骤

1.1 收集元数据

首先,我们需要收集关于数据的元数据。元数据可以来自于多种来源,例如手动编写的文档、数据字典、数据治理工具等。下面是一个简单的元数据示例:

{

"database": "example_db",

"table": "users",

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false, "primary_key": true},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

1.2 生成建表语句

根据收集到的元数据,我们可以生成 SQL 建表语句。下面是一个 Python 代码示例,用于根据元数据生成建表语句:

def generate_create_table_sql(metadata):

table_name = metadata["table"]

columns = metadata["columns"]

column_definitions = []

primary_keys = []

for column in columns:

column_def = f'{column["name"]} {column["type"]}'

if "length" in column:

column_def += f'({column["length"]})'

if not column.get("nullable", True):

column_def += " NOT NULL"

if column.get("primary_key", False):

primary_keys.append(column["name"])

if column.get("unique", False):

column_def += " UNIQUE"

column_definitions.append(column_def)

primary_key_def = ""

if primary_keys:

primary_key_def = f', PRIMARY KEY ({", ".join(primary_keys)})'

create_table_sql = f'CREATE TABLE {table_name} (\n ' + ',\n '.join(column_definitions) + primary_key_def + '\n);'

return create_table_sql

metadata = {

"database": "example_db",

"table": "users",

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false, "primary_key": true},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

create_table_sql = generate_create_table_sql(metadata)

print(create_table_sql)

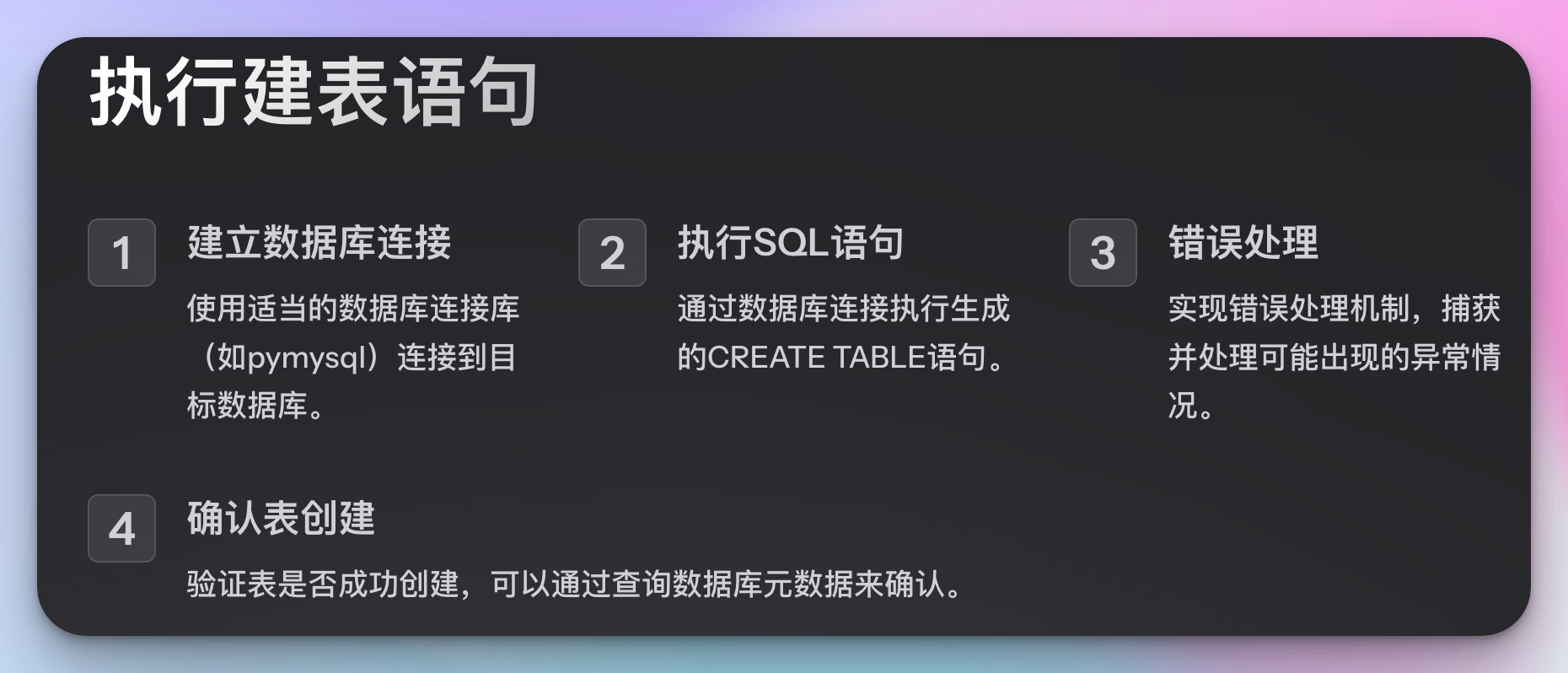

1.3 执行建表语句

生成建表语句后,我们需要在数据库中执行这些语句以创建相应的表。可以使用数据库连接库(例如 pymysql、psycopg2 等)来执行 SQL 语句:

import pymysql

def execute_create_table(sql, database):

connection = pymysql.connect(

host='localhost',

user='root',

password='password',

database=database

)

try:

with connection.cursor() as cursor:

cursor.execute(sql)

connection.commit()

finally:

connection.close()

execute_create_table(create_table_sql, metadata["database"])

示例

假设我们有以下元数据描述:

{

"database": "example_db",

"table": "orders",

"columns": [

{"name": "order_id", "type": "INT", "length": 11, "nullable": false, "primary_key": true},

{"name": "user_id", "type": "INT", "length": 11, "nullable": false},

{"name": "product_id", "type": "INT", "length": 11, "nullable": false},

{"name": "quantity", "type": "INT", "length": 11, "nullable": false},

{"name": "order_date", "type": "TIMESTAMP", "nullable": false}

]

}

通过上面的代码,我们可以生成并执行以下 SQL 语句来创建 orders 表:

CREATE TABLE orders (

order_id INT(11) NOT NULL,

user_id INT(11) NOT NULL,

product_id INT(11) NOT NULL,

quantity INT(11) NOT NULL,

order_date TIMESTAMP NOT NULL,

PRIMARY KEY (order_id)

);

2. 元数据驱动的数据管理

除了建表,元数据还可以用于其他数据管理任务,如数据迁移、数据同步、数据校验等。通过统一管理和使用元数据,可以显著简化这些任务的实现过程。

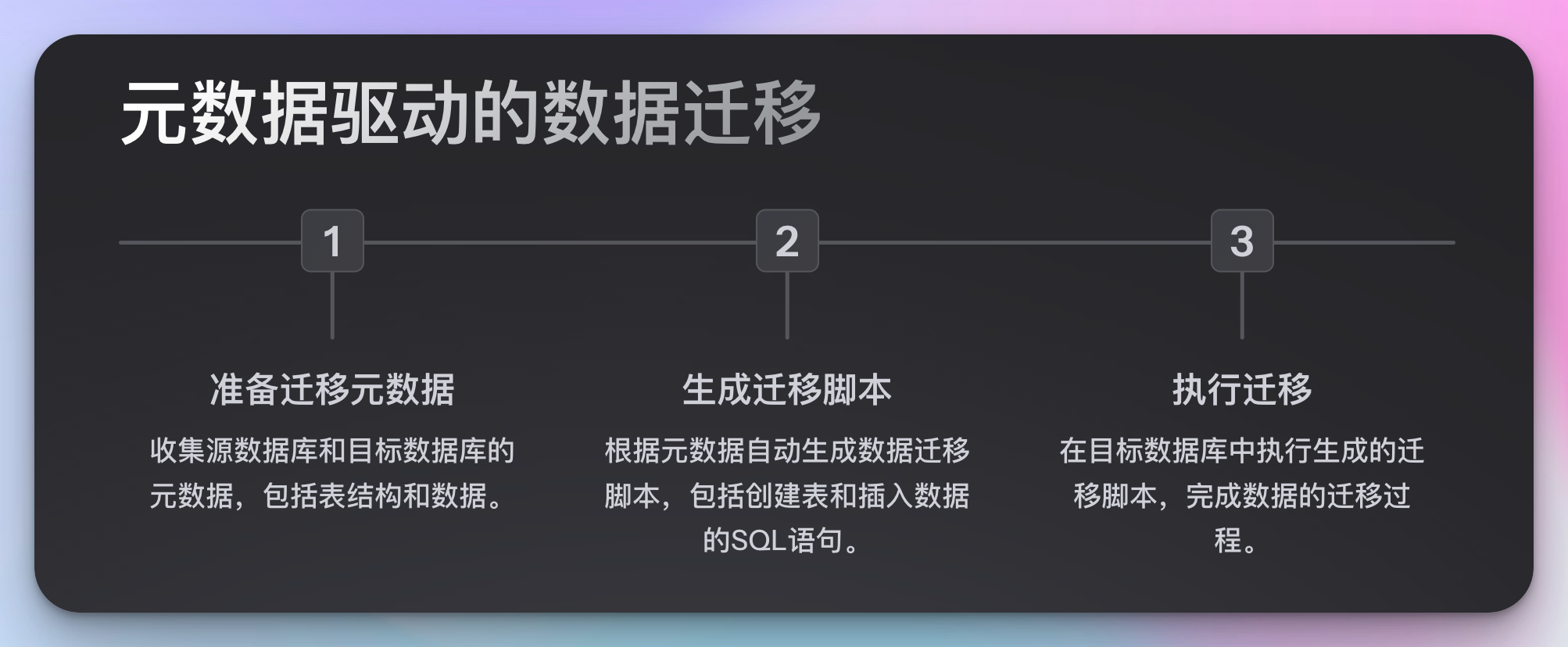

数据迁移

数据迁移是指将数据从一个系统或存储位置移动到另一个系统或存储位置的过程。通过元数据,我们可以自动生成迁移脚本,从而简化迁移过程。以下是一个示例:

假设我们需要将 example_db 数据库中的所有表和数据迁移到另一个数据库 new_db,可以使用以下 Python 代码生成迁移脚本:

def generate_migration_script(metadata):

old_database = metadata["old_database"]

new_database = metadata["new_database"]

tables = metadata["tables"]

script = f'-- Migration script from {old_database} to {new_database}\n'

for table in tables:

script += f'\n-- Migrate table {table["table"]}\n'

script += f'CREATE TABLE {new_database}.{table["table"]} LIKE {old_database}.{table["table"]};\n'

script += f'INSERT INTO {new_database}.{table["table"]} SELECT * FROM {old_database}.{table["table"]};\n'

return script

migration_metadata = {

"old_database": "example_db",

"new_database": "new_db",

"tables": [

{"table": "users"},

{"table": "orders"}

]

}

migration_script = generate_migration_script(migration_metadata)

print(migration_script)

输出的脚本如下:

-- Migration script from example_db to new_db

-- Migrate table users

CREATE TABLE new_db.users LIKE example_db.users;

INSERT INTO new_db.users SELECT * FROM example_db.users;

-- Migrate table orders

CREATE TABLE new_db.orders LIKE example_db.orders;

INSERT INTO new_db.orders SELECT * FROM example_db.orders;

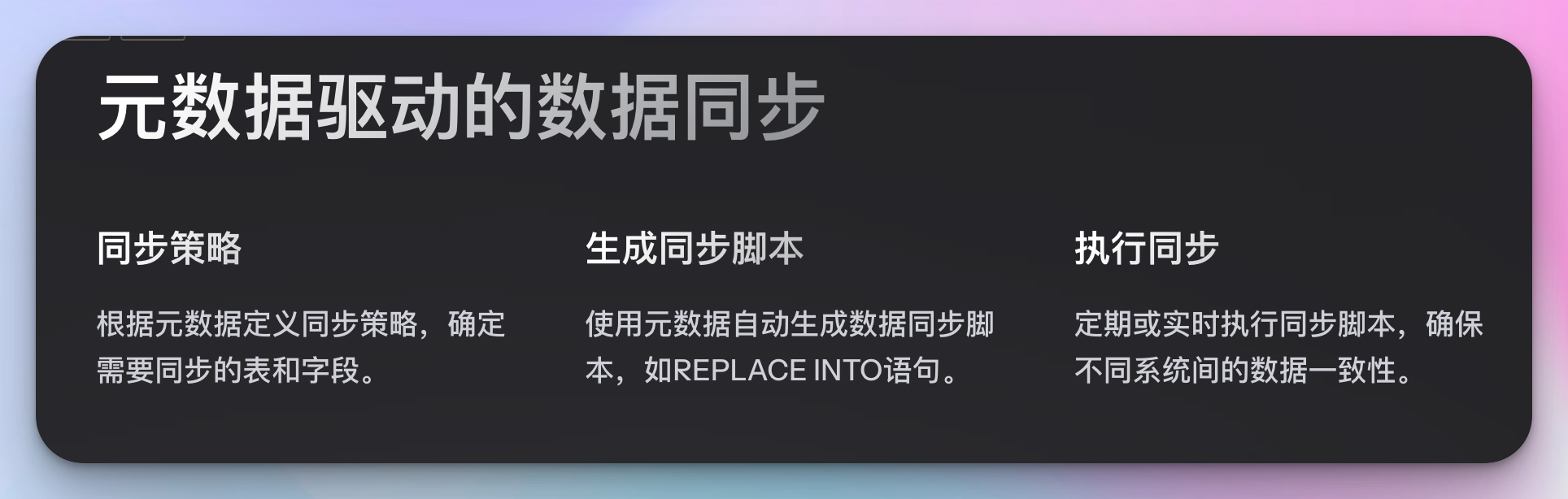

数据同步

数据同步是确保不同系统或存储位置中的数据保持一致的过程。元数据可以帮助我们确定哪些表和字段需要同步,以及如何处理冲突。以下是一个简单的示例,使用元数据生成数据同步脚本:

def generate_sync_script(metadata):

source_database = metadata["source_database"]

target_database = metadata["target_database"]

tables = metadata["tables"]

script = f'-- Sync script from {source_database} to {target_database}\n'

for table in tables:

script += f'\n-- Sync table {table["table"]}\n'

script += f'REPLACE INTO {target_database}.{table["table"]} SELECT * FROM {source_database}.{table["table"]};\n'

return script

sync_metadata = {

"source_database": "example_db",

"target_database": "sync_db",

"tables": [

{"table": "users"},

{"table": "orders"}

]

}

sync_script = generate_sync_script(sync_metadata)

print(sync_script)

输出的脚本如下:

-- Sync script from example_db to sync_db

-- Sync table users

REPLACE INTO sync_db.users SELECT * FROM example_db.users;

-- Sync table orders

REPLACE INTO sync_db.orders SELECT * FROM example_db.orders;

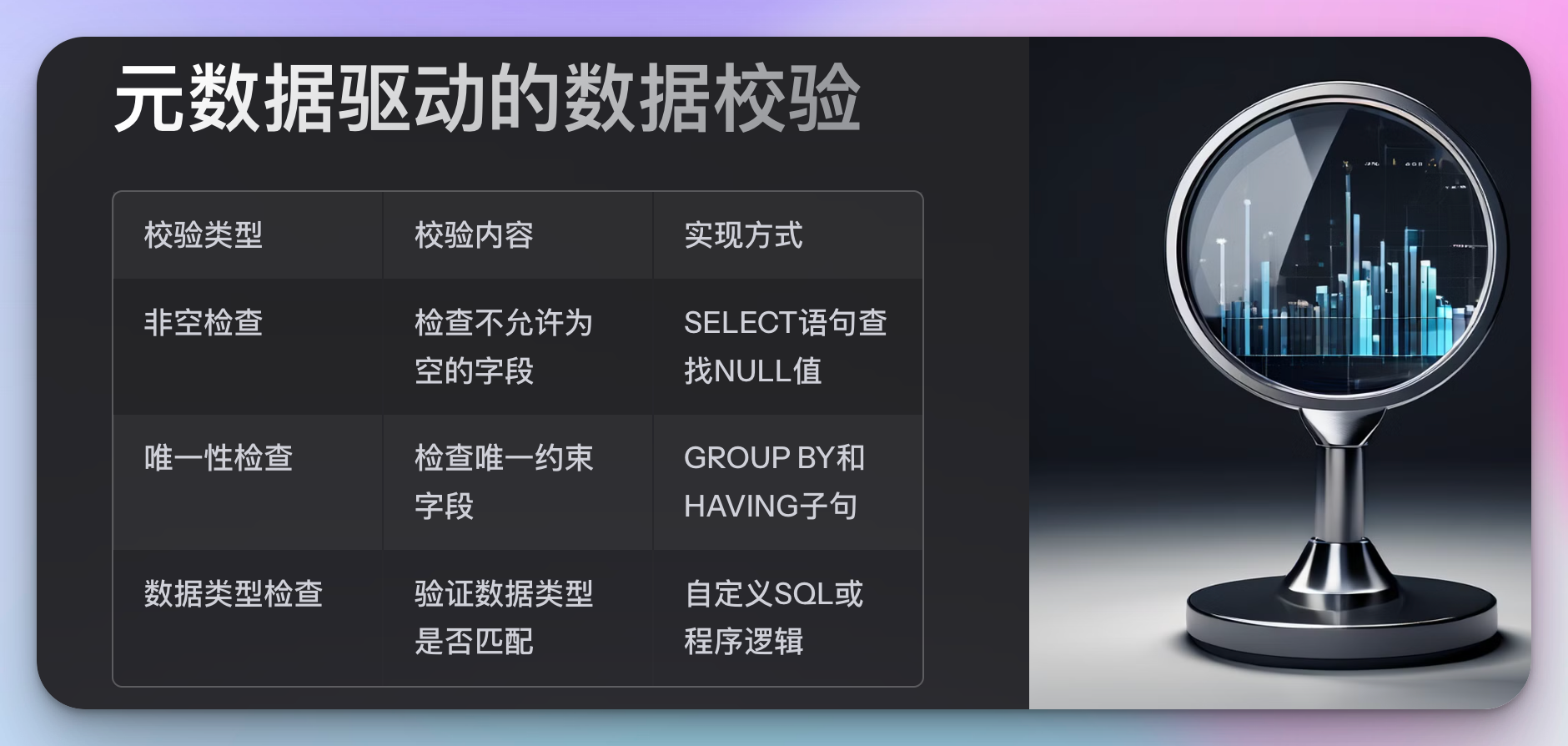

数据校验

数据校验是指验证数据是否符合预期的过程。通过元数据,我们可以自动生成校验规则,并据此进行数据校验。以下是一个示例,使用元数据生成数据校验脚本:

def generate_validation_script(metadata):

database = metadata["database"]

table = metadata["table"]

columns = metadata["columns"]

script = f'-- Validation script for table {table} in database {database}\n'

for column in columns:

if not column.get("nullable", True):

script += f'SELECT * FROM {database}.{table} WHERE {column["name"]} IS NULL;\n'

if column.get("unique", False):

script += f'SELECT {column["name"]}, COUNT(*) FROM {database}.{table} GROUP BY {column["name"]} HAVING COUNT(*) > 1;\n'

return script

validation_metadata = {

"database": "example_db",

"table": "users",

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false, "primary_key": true},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

validation_script = generate_validation_script(validation_metadata)

print(validation_script)

输出的脚本如下:

-- Validation script for table users in database example_db

SELECT * FROM example_db.users WHERE id IS NULL;

SELECT * FROM example_db.users WHERE name IS NULL;

SELECT * FROM example_db.users WHERE email IS NULL;

SELECT email, COUNT(*) FROM example_db.users GROUP BY email HAVING COUNT(*) > 1;

SELECT * FROM example_db.users WHERE created_at IS NULL;

3. 元数据驱动的数据治理

数据治理涉及数据的管理、控制和保护,以确保数据的质量、合规性和安全性。利用元数据可以显著提升数据治理的效果和效率。下面将介绍几种利用元数据进行数据治理的方式。

数据质量管理

数据质量管理是确保数据准确、完整、一致和及时的过程。元数据可以帮助我们定义和执行数据质量规则。例如,我们可以根据元数据自动生成数据质量检查脚本。

def generate_data_quality_checks(metadata):

database = metadata["database"]

table = metadata["table"]

columns = metadata["columns"]

checks = []

for column in columns:

if not column.get("nullable", True):

checks.append(f'SELECT COUNT(*) FROM {database}.{table} WHERE {column["name"]} IS NULL;')

if column.get("unique", False):

checks.append(f'SELECT {column["name"]}, COUNT(*) FROM {database}.{table} GROUP BY {column["name"]} HAVING COUNT(*) > 1;')

if column.get("type") in ["INT", "FLOAT"] and "min_value" in column:

checks.append(f'SELECT COUNT(*) FROM {database}.{table} WHERE {column["name"]} < {column["min_value"]};')

if column.get("type") in ["INT", "FLOAT"] and "max_value" in column:

checks.append(f'SELECT COUNT(*) FROM {database}.{table} WHERE {column["name"]} > {column["max_value"]};')

return checks

quality_metadata = {

"database": "example_db",

"table": "users",

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false, "primary_key": true},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true},

{"name": "age", "type": "INT", "nullable": true, "min_value": 0, "max_value": 120},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

quality_checks = generate_data_quality_checks(quality_metadata)

for check in quality_checks:

print(check)

输出的检查脚本如下:

SELECT COUNT(*) FROM example_db.users WHERE id IS NULL;

SELECT COUNT(*) FROM example_db.users WHERE name IS NULL;

SELECT COUNT(*) FROM example_db.users WHERE email IS NULL;

SELECT email, COUNT(*) FROM example_db.users GROUP BY email HAVING COUNT(*) > 1;

SELECT COUNT(*) FROM example_db.users WHERE age < 0;

SELECT COUNT(*) FROM example_db.users WHERE age > 120;

SELECT COUNT(*) FROM example_db.users WHERE created_at IS NULL;

数据安全与合规

数据安全与合规确保数据在存储、处理和传输过程中受到保护,遵守相关法律法规和行业标准。元数据可以帮助我们定义数据安全策略和合规要求。

def generate_security_policy(metadata):

database = metadata["database"]

table = metadata["table"]

columns = metadata["columns"]

policy = f'-- Security policy for table {table} in database {database}\n'

for column in columns:

if column.get("sensitive", False):

policy += f'ALTER TABLE {database}.{table} MODIFY {column["name"]} ENCRYPTED;\n'

return policy

security_metadata = {

"database": "example_db",

"table": "users",

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false, "primary_key": true},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true, "sensitive": true},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

security_policy = generate_security_policy(security_metadata)

print(security_policy)

输出的安全策略如下:

-- Security policy for table users in database example_db

ALTER TABLE example_db.users MODIFY email ENCRYPTED;

数据生命周期管理

数据生命周期管理涉及数据从创建到销毁的整个过程。元数据可以帮助我们定义数据的保留策略、归档策略和销毁策略。

def generate_lifecycle_policy(metadata):

database = metadata["database"]

table = metadata["table"]

retention_period = metadata.get("retention_period", "5 YEARS")

policy = f'-- Lifecycle policy for table {table} in database {database}\n'

policy += f'ALTER TABLE {database}.{table} SET RETENTION = {retention_period};\n'

return policy

lifecycle_metadata = {

"database": "example_db",

"table": "users",

"retention_period": "3 YEARS"

}

lifecycle_policy = generate_lifecycle_policy(lifecycle_metadata)

print(lifecycle_policy)

输出的生命周期策略如下:

-- Lifecycle policy for table users in database example_db

ALTER TABLE example_db.users SET RETENTION = 3 YEARS;

4. 元数据的自动化与工具集成

在大数据开发中,元数据的管理和应用往往需要借助自动化工具和平台来实现。通过集成各种工具,我们可以实现元数据的自动收集、存储、更新和应用,从而大大提高工作效率。下面将介绍几种常见的元数据管理工具和它们的使用方法。

元数据管理工具

Apache Atlas

Apache Atlas 是一个流行的开源元数据管理和数据治理工具。它提供了丰富的元数据管理功能,包括数据血缘、分类、标签和搜索等。

以下是一个使用 Python 与 Apache Atlas 进行元数据管理的示例:

from apache_atlas.client.base_client import AtlasClient

from apache_atlas.model.instance import AtlasEntity

# 连接到 Atlas 服务器

client = AtlasClient("http://localhost:21000", ("admin", "admin"))

# 创建元数据实体

database_entity = AtlasEntity("hive_db", {

"name": "example_db",

"qualifiedName": "example_db@cluster",

"clusterName": "cluster"

})

# 提交实体到 Atlas

client.entity_post.create_entity(database_entity)

print("Database entity created:", database_entity.guid)

Apache Hive Metastore

Apache Hive Metastore 是一个集中管理 Hive 元数据的存储系统。它包含了数据库、表、分区、列等信息。

以下是一个使用 Python 访问 Hive Metastore 的示例:

from pyhive import hive

# 连接到 Hive Metastore

conn = hive.connect(host='localhost', port=9083, username='hive')

# 查询数据库信息

cursor = conn.cursor()

cursor.execute("SHOW DATABASES")

databases = cursor.fetchall()

for db in databases:

print(db)

Apache Airflow

Apache Airflow 是一个开源的工作流调度器,常用于数据管道的自动化。通过集成元数据管理工具,我们可以实现元数据驱动的任务调度。

以下是一个使用 Airflow 管理数据管道的示例:

from airflow import DAG

from airflow.operators.bash import BashOperator

from datetime import datetime

# 定义 DAG

dag = DAG(

'metadata_pipeline',

default_args={

'owner': 'airflow',

'start_date': datetime(2023, 1, 1),

'retries': 1,

},

schedule_interval='@daily',

)

# 定义任务

t1 = BashOperator(

task_id='extract_metadata',

bash_command='python extract_metadata.py',

dag=dag,

)

t2 = BashOperator(

task_id='process_data',

bash_command='python process_data.py',

dag=dag,

)

# 设置任务依赖

t1 >> t2

集成元数据管理与数据管道

通过将元数据管理工具与数据管道相集成,我们可以实现数据管道的自动化和智能化。例如,可以通过元数据来动态生成数据管道的任务,或根据元数据的变化来触发数据管道的运行。

以下是一个简单的示例,展示了如何通过元数据动态生成数据管道任务:

from airflow import DAG

from airflow.operators.bash import BashOperator

from datetime import datetime

import json

# 定义 DAG

dag = DAG(

'dynamic_pipeline',

default_args={

'owner': 'airflow',

'start_date': datetime(2023, 1, 1),

'retries': 1,

},

schedule_interval='@daily',

)

# 从元数据中读取任务配置

with open('metadata.json', 'r') as f:

metadata = json.load(f)

# 动态生成任务

previous_task = None

for task_metadata in metadata['tasks']:

task = BashOperator(

task_id=task_metadata['task_id'],

bash_command=task_metadata['bash_command'],

dag=dag,

)

if previous_task:

previous_task >> task

previous_task = task

示例元数据文件(metadata.json)

{

"tasks": [

{

"task_id": "extract_metadata",

"bash_command": "python extract_metadata.py"

},

{

"task_id": "process_data",

"bash_command": "python process_data.py"

},

{

"task_id": "load_data",

"bash_command": "python load_data.py"

}

]

}

5. 元数据的高级应用

在大数据开发中,元数据不仅仅用于基础的数据管理任务,还可以支持许多高级应用,例如数据血缘分析、影响分析和数据编目等。通过对元数据的深度挖掘,我们可以获得更多的数据洞察,提高数据的可用性和可靠性。

数据血缘分析

数据血缘分析是指追踪数据从源头到目标的流动路径,了解数据在各个阶段的变换和处理过程。通过元数据,我们可以自动构建数据血缘图,帮助我们理解数据的来源和去向。

以下是一个简单的数据血缘分析示例,使用 Python 构建数据血缘图:

import networkx as nx

import matplotlib.pyplot as plt

def build_lineage(metadata):

G = nx.DiGraph()

for table in metadata["tables"]:

G.add_node(table["name"], type="table")

for column in table["columns"]:

column_node = f'{table["name"]}.{column["name"]}'

G.add_node(column_node, type="column")

G.add_edge(table["name"], column_node)

if "source_columns" in column:

for source_column in column["source_columns"]:

G.add_edge(source_column, column_node)

return G

def plot_lineage(G):

pos = nx.spring_layout(G)

labels = {node: node for node in G.nodes()}

nx.draw(G, pos, labels=labels, with_labels=True, node_size=3000, node_color="lightblue", font_size=10, font_weight="bold", arrows=True)

plt.show()

lineage_metadata = {

"tables": [

{

"name": "orders",

"columns": [

{"name": "order_id", "type": "INT"},

{"name": "user_id", "type": "INT"},

{"name": "total_amount", "type": "FLOAT", "source_columns": ["order_items.amount"]},

{"name": "created_at", "type": "TIMESTAMP"}

]

},

{

"name": "order_items",

"columns": [

{"name": "order_id", "type": "INT"},

{"name": "item_id", "type": "INT"},

{"name": "amount", "type": "FLOAT"}

]

}

]

}

G = build_lineage(lineage_metadata)

plot_lineage(G)

影响分析

影响分析是指评估某个数据变更对系统其他部分的影响。通过元数据,我们可以了解数据表和字段之间的依赖关系,从而分析变更的影响范围。

以下是一个简单的影响分析示例,使用 Python 评估表和字段之间的依赖关系:

def impact_analysis(metadata, target_table):

impacted_tables = set()

impacted_columns = set()

for table in metadata["tables"]:

for column in table["columns"]:

if "source_columns" in column:

for source_column in column["source_columns"]:

if source_column.startswith(target_table):

impacted_tables.add(table["name"])

impacted_columns.add(f'{table["name"]}.{column["name"]}')

return impacted_tables, impacted_columns

impact_metadata = {

"tables": [

{

"name": "orders",

"columns": [

{"name": "order_id", "type": "INT"},

{"name": "user_id", "type": "INT"},

{"name": "total_amount", "type": "FLOAT", "source_columns": ["order_items.amount"]},

{"name": "created_at", "type": "TIMESTAMP"}

]

},

{

"name": "order_items",

"columns": [

{"name": "order_id", "type": "INT"},

{"name": "item_id", "type": "INT"},

{"name": "amount", "type": "FLOAT"}

]

}

]

}

target_table = "order_items"

impacted_tables, impacted_columns = impact_analysis(impact_metadata, target_table)

print("Impacted tables:", impacted_tables)

print("Impacted columns:", impacted_columns)

数据编目

数据编目是指为数据资产创建详细的目录,方便用户查找和理解数据。通过元数据,我们可以自动生成数据目录,包括表结构、字段描述、数据类型等信息。

以下是一个简单的数据编目示例,使用 Python 生成数据目录:

def generate_data_catalog(metadata):

catalog = {}

for table in metadata["tables"]:

table_catalog = {

"columns": []

}

for column in table["columns"]:

column_catalog = {

"name": column["name"],

"type": column["type"]

}

if "length" in column:

column_catalog["length"] = column["length"]

if "nullable" in column:

column_catalog["nullable"] = column["nullable"]

table_catalog["columns"].append(column_catalog)

catalog[table["name"]] = table_catalog

return catalog

catalog_metadata = {

"tables": [

{

"name": "users",

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

},

{

"name": "orders",

"columns": [

{"name": "order_id", "type": "INT", "length": 11, "nullable": false},

{"name": "user_id", "type": "INT", "length": 11, "nullable": false},

{"name": "total_amount", "type": "FLOAT", "nullable": false},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

]

}

catalog = generate_data_catalog(catalog_metadata)

print(catalog)

输出的目录如下:

{

"users": {

"columns": [

{"name": "id", "type": "INT", "length": 11, "nullable": false},

{"name": "name", "type": "VARCHAR", "length": 255, "nullable": false},

{"name": "email", "type": "VARCHAR", "length": 255, "nullable": false, "unique": true},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

},

"orders": {

"columns": [

{"name": "order_id", "type": "INT", "length": 11, "nullable": false},

{"name": "user_id", "type": "INT", "length": 11, "nullable": false},

{"name": "total_amount", "type": "FLOAT", "nullable": false},

{"name": "created_at", "type": "TIMESTAMP", "nullable": false}

]

}

}

总结:

在大数据开发中,元数据扮演着至关重要的角色,从基础的数据建模到复杂的数据治理任务,元数据都能显著提高工作效率和数据质量。本文详细介绍了元数据的概念及其在数据建表、数据迁移、数据同步和数据校验中的应用。通过代码示例,展示了如何收集元数据并生成相应的SQL脚本以自动化这些任务。

此外,本文还探讨了元数据在高级应用中的潜力,包括数据血缘分析、影响分析和数据编目。通过元数据驱动的工具和平台,如Apache Atlas、Hive Metastore和Apache Airflow,我们可以实现元数据的自动管理和深度挖掘,进一步提升数据治理的效果。

希望这篇博客文章能为你在大数据开发中的元数据管理提供实用的指导和启发。如果你有任何问题或需要进一步的解释,请随时留言。期待你的反馈和讨论。

原文地址:https://blog.csdn.net/u012955829/article/details/140401952

免责声明:本站文章内容转载自网络资源,如本站内容侵犯了原著者的合法权益,可联系本站删除。更多内容请关注自学内容网(zxcms.com)!