Implementing softmax from scratch

Code

import numpy as np
import torch
import torchvision
import torchvision.transforms as transforms
from torch.utils import data

# ToTensor converts an H x W x C image into a C x H x W float tensor scaled to [0, 1]
mnist_train = torchvision.datasets.FashionMNIST(root="./data", train=True, download=True,
                                                transform=transforms.ToTensor())
mnist_test = torchvision.datasets.FashionMNIST(root="./data", train=False, download=True,
                                               transform=transforms.ToTensor())

batch_size = 256

# Read random mini-batches
train_loader = data.DataLoader(mnist_train, batch_size, shuffle=True)
test_loader = data.DataLoader(mnist_test, batch_size, shuffle=True)

# feature, label = mnist_train[0]
# print(feature.shape, label)  # torch.Size([1, 28, 28]) 9

num_inputs = 784  # each 1 x 28 x 28 image is flattened into a vector of length 784
num_outputs = 10  # Fashion-MNIST has 10 classes

def softmax(X):
    X_exp = X.exp()
    partition = X_exp.sum(dim=1, keepdim=True)  # sum over each row
    return X_exp / partition  # broadcasting divides each row by its own sum
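The softmax above exponentiates the raw scores directly, so very large inputs can overflow to `inf` and produce `nan` after the division. A common remedy, sketched here as an assumption and not as part of the original code, is to subtract each row's maximum before exponentiating. The name `stable_softmax` and the sample tensor are hypothetical.

```python
import torch

def softmax(X):
    # Same computation as the softmax defined in this post
    X_exp = X.exp()
    partition = X_exp.sum(dim=1, keepdim=True)
    return X_exp / partition

def stable_softmax(X):
    # Hypothetical variant: shift each row so that its maximum becomes 0.
    # The shift cancels in the ratio, so the result is mathematically unchanged.
    X_shifted = X - X.max(dim=1, keepdim=True).values
    X_exp = X_shifted.exp()
    return X_exp / X_exp.sum(dim=1, keepdim=True)

X = torch.tensor([[1.0, 2.0, 3.0],
                  [1000.0, 1001.0, 1002.0]])
naive = softmax(X)          # second row overflows in float32
stable = stable_softmax(X)  # both rows are valid probability vectors
row_sums = stable.sum(dim=1)
```

With pixel values in [0, 1] and weights initialised near zero, the plain version is unlikely to overflow in this example; the shift mainly matters for larger logits.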

def net(X):
    # Flatten each image to a row vector, then apply the linear layer and softmax
    return softmax(torch.mm(X.view((-1, num_inputs)), W) + b)

def cross_entropy(y_hat, y):
    # gather picks the predicted probability of the true class for each sample
    return -torch.log(y_hat.gather(1, y.view(-1, 1)))
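To see what `gather` does inside `cross_entropy`, here is a small worked example. The probabilities and labels are made up for illustration and do not come from the original post.

```python
import torch

def cross_entropy(y_hat, y):
    # Same loss as in this post: negative log-probability of the true class
    return -torch.log(y_hat.gather(1, y.view(-1, 1)))

# Two samples, three classes (illustrative values only)
y_hat = torch.tensor([[0.1, 0.3, 0.6],
                      [0.3, 0.2, 0.5]])
y = torch.tensor([0, 2])  # true class indices

# For each row i, gather selects column y[i]; the result has shape (2, 1)
picked = y_hat.gather(1, y.view(-1, 1))
loss = cross_entropy(y_hat, y)
```

Here `picked` holds 0.1 for the first sample and 0.5 for the second, so the per-sample losses are `-log(0.1)` and `-log(0.5)`.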

def sgd(params, lr, batch_size):
    for param in params:
        # Update through param.data so that autograd does not track the step
        param.data -= lr * param.grad / batch_size
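As an alternative to going through `param.data`, the same update can be written inside `torch.no_grad()`. This is a minimal sketch and not the author's code: `sgd_no_grad` is a hypothetical name, and unlike the `sgd` above it also zeroes the gradients itself.

```python
import torch

def sgd_no_grad(params, lr, batch_size):
    # Hypothetical variant: in-place updates inside no_grad are not tracked by autograd
    with torch.no_grad():
        for param in params:
            param -= lr * param.grad / batch_size
            param.grad.zero_()  # reset so the next backward() starts from zero

# Tiny check on a toy objective: loss = sum(w^2), so grad = 2 * w
w = torch.tensor([1.0, 2.0], requires_grad=True)
loss = (w ** 2).sum()
loss.backward()
sgd_no_grad([w], lr=0.1, batch_size=1)  # w becomes w - 0.1 * 2 * w
```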

def accuracy(y_hat, y):
    return (y_hat.argmax(dim=1) == y).float().mean().item()

W = torch.tensor(np.random.normal(0, 0.01, (num_inputs, num_outputs)), dtype=torch.float)
b = torch.zeros(num_outputs, dtype=torch.float)
W.requires_grad_()
b.requires_grad_()

num_epochs, lr = 10, 0.1
loss = cross_entropy
optimizer = sgd

for epoch in range(1, 1 + num_epochs):

total_loss = 0.0

train_sample = 0.0

train_acc_sum = 0

for x, y in train_loader:

y_hat = net(x)

l = loss(y_hat, y) # 256,1

# 梯度清零

l.sum().backward()

sgd([W, b], lr, batch_size) # 使用参数的梯度更新参数

W.grad.data.zero_()

b.grad.data.zero_()

total_loss += l.sum().item()

train_sample += y.shape[0]

train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()

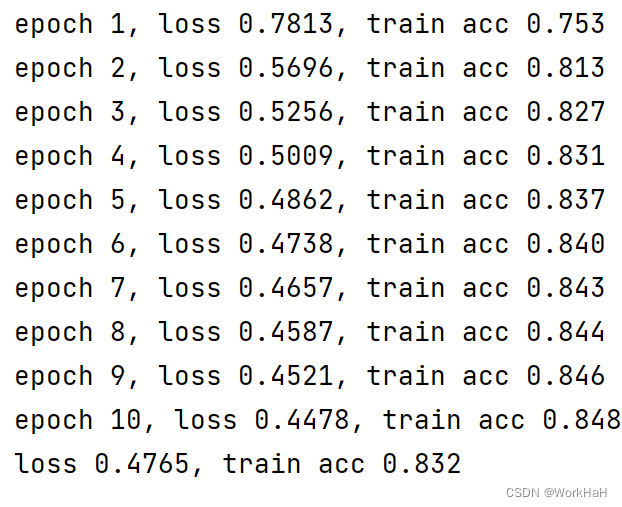

print('epoch %d, loss %.4f, train acc %.3f' % (epoch, total_loss / train_sample, train_acc_sum / train_sample,))

# Evaluate on the test set; no gradients are needed here
with torch.no_grad():
    total_loss = 0.0
    test_sample = 0.0
    test_acc_sum = 0
    for x, y in test_loader:
        y_hat = net(x)
        l = loss(y_hat, y)  # shape (batch, 1), e.g. (256, 1)
        total_loss += l.sum().item()
        test_sample += y.shape[0]
        test_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
    print('loss %.4f, test acc %.3f' % (total_loss / test_sample, test_acc_sum / test_sample))
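As a sanity check on the hand-written pieces, the softmax followed by the negative log of the true-class probability should agree with PyTorch's built-in `torch.nn.functional.cross_entropy` applied to the raw scores. The sketch below uses random logits and made-up labels, not Fashion-MNIST data.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)     # 4 samples, 10 classes (illustrative)
y = torch.tensor([3, 0, 9, 1])  # made-up labels

# Hand-written path, mirroring softmax and cross_entropy from this post
probs = logits.exp() / logits.exp().sum(dim=1, keepdim=True)
manual = -torch.log(probs.gather(1, y.view(-1, 1))).squeeze(1)

# Built-in path: takes raw logits and applies log-softmax internally
builtin = F.cross_entropy(logits, y, reduction="none")
```

The two tensors should match up to floating-point error. The built-in function expects unnormalised scores, so it should not be fed the output of `softmax`.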

Results

Original article: https://blog.csdn.net/weixin_45920385/article/details/140157697
